Dr. Martin Jan Stránský, of Czech origin but also holding American citizenship, is a man of multiple passions and achievements: a respected neurologist, clinical assistant professor of neurology at Yale University, a wine producer, and the author of the book The Rise and Fall of the Human Mind. His core belief, “we are our brains,” lies at the foundation of all his work. Through his writings, podcasts, and captivating speeches, Dr. Stránský manages to transform the complexity of neuroscience into clear and accessible ideas, using metaphors and humor to explain how our brain shapes our identity. His unique ability to combine scientific rigor with oratory has made him a sought-after and compelling voice in the field, offering profound insights on topics ranging from neuroplasticity to the social challenges of modern life. In this exclusive interview for Q Magazine, Martin J. Stránský helps us better understand the most fascinating organ of the human body, the brain, and how it is deteriorating under the assault of digitalization in the age of technology, warning that artificial intelligence will ultimately lead to the destruction of humanity.

- Procurorii Gigi Ștefan și Teodor Niță, arestați pentru 30 de zile. Care sunt acuzațiile

- Nicușor Dan, împreună cu fiul său la BSDA 2026. „Nu aș vrea să numesc un Guvern care să nască o altă criză”

- Ofițerul Ileana Denisa Știrbulescu, „cea mai rapidă polițistă din România”, a câștigat aurul pe cinci kilometri la Olimpiada Mondială a Polițiștilor

- PSD, întâiul chemat

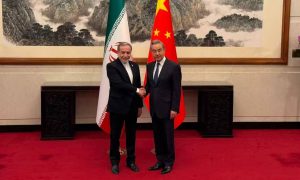

- Miza unei întâlniri care poate schimba fața lumii

DIGITALISATION: A WEAPON OF DESTRUCTION OR OF PROGRESS?

My first question is related to the COVID period, when your perspective differed somewhat from that of others discussing the issue. Do you think it’s possible to form a united front in neuroscience against the digital threats to our future? Or do you believe that differing opinions will make it difficult to reach a common stance?

I think the medical community, like the rest of the world, has become highly globalized. When it comes to the effects of technology, particularly digital technology, the neuroscience community, much like scientists in general, has often been at the forefront. This applies both in terms of ethical considerations and the timing of discussions around the negative impacts of digital communication.

For humanity, emotions have always played a greater role than facts, and this has been true for thousands of years.

We can still see ongoing debates over issues that should be well-settled, such as the effectiveness of vaccinations.

It will take time to see whether neuroscience and science in general gain more widespread respect.

We can look at climate change as an example. Scientists and politicians began presenting clear evidence 20 to 25 years ago, yet many people, including world leaders, still reject it. We can also observe the leaders being elected in the most powerful country in the world and their attitudes toward scientific facts.

When it comes to digital technology, neuroscience is largely unified in its concerns.

Interestingly, many of the very individuals who developed digital technology and artificial intelligence have signed petitions calling for limits on them.

Bill Gates and Steve Jobs did not allow their children to use digital technology at home. Similarly, Elon Musk, Sam Altman, and other pioneers of artificial intelligence have supported multiple petitions—at least three—warning about its dangers. Some of these petitions have been signed by thousands of computer engineers and scientists, stating that AI represents, and I quote, “the greatest threat to human extinction.”

Yet, despite these warnings, we continue to push forward.

Dr. Martin Jan Stránský holds Board Certification in Neurology from the American Board of Psychiatry and Neurology; Specialized Training Certification and Diploma in Neurology from the Czech Republic; Board Certification from the American Board of Internal Medicine; Medical License in the Czech Republic; and a Fellowship from the American College of Physicians. He is a member of the American Board of Internal Medicine, the American Academy of Neurology, the American College of Physicians, and the Czech Medical Chamber. He is licensed to practice in the United States (CT), the Czech Republic (EU), and Grenada, W.I. He is also a member of the Czech Medical Association Jan E. Purkyně: Neurological Society, Neuroscience Society, Society of Social Pediatrics and Pediatric Psychology, Society of Social Medicine, and an honored member of Sigma Xi, an international honor society for research.

When do you think artificial intelligence will reach its full potential?

Artificial intelligence has already arrived. It has been with us for quite some time. The real question is: how do we define AI? Is it just a toaster that turns on at the sound of your voice? Is a GPS considered artificial intelligence? To some extent, yes.

Even the creators of AI may not have a fully satisfactory definition. But perhaps the best one is this: AI is a computer program that, by design, doesn’t truly understand what it’s doing.

Artificial intelligence programs are designed without predetermined algorithms, meaning they operate without fully understanding what they are doing. This allows them to generate completely new and unpredictable outcomes. That process is already happening.

There are many other intriguing and concerning aspects of AI. However, many far more influential and well-known figures than myself have warned that AI will ultimately lead to humanity’s destruction. I am absolutely convinced of this because, historically, whenever a biological life form begins transferring its genetic and intellectual potential to a machine, it marks a critical turning point—and that is precisely what we are witnessing.

It’s frightening!

Yes, it is. We’ve reached a pivotal moment in our evolutionary history, which has been unfolding for 400,000 years.

Yet, in just 25 years, we have created a disastrous scenario. If this trajectory continues, it will inevitably lead, at the very least, to a reset: a moment when something significant happens.

A significant number of people will likely suffer, and possibly die, leading to a realignment of our priorities. I’m not saying this as a religious fanatic or a conspiracy theorist. I’m saying this as one of many scientists who base their conclusions on a simple, easily understandable fact: we are sophisticated biological animals.

Everything we do, every event that occurs in our society and in our world, impacts us as biological beings and influences our genes. This will always hold true as long as we exist.

Therefore, the moment you prioritize comfort and laziness over living with positive stress as a biological organism, you place the future of that organism in serious jeopardy.

The more we simplify our lives, the more we become enslaved and dependent on those very conveniences.

Yet, we remain biological organisms. We continue to produce and pass on our genes according to natural laws – laws that technology can never surpass or override, I should say.

Some milestones in Dr. Martin Jan Stránský’s biography include: 1986-1989: Resident and Chief Resident – Department of Neurology, Albany Medical Center, Albany, NY; 1986-1988: Internship and Residency – Department of Internal Medicine, Coney Island Hospital (SUNY), Brooklyn; 1985: Elective Course in Neurology, Guy’s Hospital and National Neurological Institute, London; 1983: Doctor of Medicine, St. George’s University School of Medicine, Grenada, W.I.; 1978: Bachelor of Arts in Art History, Columbia University, New York, N.Y.

WHO WAS FIRST: GOD OR MAN?

Dr. Stránský, in your book you draw a connection between intelligence and belief, comparing believers with non-believers. I find this idea both intriguing and a bit confusing. Could you please elaborate on this concept and clarify your perspective? Are you implying that non-believers are more intelligent than believers, or is there a different nuance to your argument?

Then how do you explain the odyssey of the Jewish people? They are generally regarded as one of the most intelligent communities in history, at least in terms of the number of Nobel Prize winners they have produced. For two thousand years they lived in exile, yet they preserved their unity and identity through their shared faith in the sacred texts and, also through faith, kept alive the hope of returning to Jerusalem. As you know, Psalm 137, invoked precisely to remind every Jew, “If I forget thee, O Jerusalem, let my right hand forget her cunning,” was a source of strength and resistance for this people, along, of course, with the Torah and the Talmud.

How can religion, in this case, be seen as a limiting factor for intelligence when it has clearly played a central role in preserving and advancing their intellectual and cultural legacy?

What I said is that when you compare non-believers with the general population, atheists, as a group, tend to score higher on measures of intelligence. This suggests that individuals with higher cognitive abilities, as measured by today’s standards, often critically evaluate ideas and may not necessarily conclude that God exists or that they need a God in their lives. That’s the point I made. It does not mean that believers are unintelligent or that non-believers are inherently smarter.

There are countless brilliant individuals who are deeply religious, who firmly believe in God, and who credit their faith for guiding them in their careers, families, and personal fulfillment. Their belief brings them great satisfaction and meaning, and that is entirely valid.

My observation is not about labelling believers as less intelligent but rather about noting a correlation between higher intelligence and a tendency to question or reject religious beliefs. It’s a nuanced distinction, and I fully acknowledge that intelligence and belief are not mutually exclusive.

How do you explain someone’s belief?

The issue here is one of profound philosophical interest, as well as scientific relevance. From a scientific perspective, we know that the brain learns solely through comparison. For example, when a young child touches an electrical socket and gets an electric shock, they quickly learn it’s not a good idea and avoid doing it again. This is because they now have a reference point for comparison.

Similarly, when you speak with me today, you’ll inevitably compare this conversation to other interviews you’ve conducted. Both consciously and subconsciously, your work will be shaped by the impressions and insights you gather from this interaction. Likewise, I will compare my discussion with you to other conversations I’ve had.

This is how our minds function, because the brain is wired to make connections only when something new or different occurs. Otherwise, it doesn’t register much.

The challenge—or the fascinating solution—arises when something happens that we can’t explain. Even today, there are many things I don’t fully understand, but imagine early humans observing natural phenomena like lightning. They had no way of explaining where lightning came from.

So, what does the brain do in such situations? It creates an explanation, even if that explanation isn’t grounded in reality. This act of creating an explanation provides a sense of comfort and a way to make sense of the unknown. For early humans, the explanation was that there were many gods controlling these forces. This is how belief systems often emerge—as a way to fill the gaps in our understanding and bring order to the chaos of the unknown.

Currently, Dr. Stránský is a Clinical Assistant Professor, Department of Neurology, Yale University School of Medicine; Director, Yale University – Charles University Neuroscience Exchange Program; and Reviewer for Yale University, NIH/FIC Global Health Scholars Program.

The Jewish faith, by the way, was the first to establish monotheism, which became one of its greatest strengths. Monotheism was developed by the Jewish people, as both a neurological and psychological tool, to aid their survival. In a world dominated by pantheism and polytheism, they envisioned a God who was exclusively theirs—a God with whom they made a covenant. This God provided them with clear rules, metaphorically written in stone, and promised protection and guidance through their trials and tribulations if they adhered to these rules. This framework not only gave them a unique identity but also a sense of purpose, unity, and resilience in the face of adversity.

So, you can see how the cultural and political circumstances of a given time shape human reactions to certain situations. Before the Jewish people established monotheism, humans created many gods—often strange and varied. For example, I lived in India for several years and observed the rich diversity of deities in Hinduism, such as gods with six hands or elephant trunks. These representations reflect the human imagination and the need to make sense of the world through symbolic figures.

The fundamental question, then, is: Did God exist before the human brain existed? My answer, as a neuroscientist, is no.

Belief in God or gods is a product of the human brain’s ability to create explanations, narratives, and symbols to navigate the complexities of existence. Without the human brain, the concept of God would not exist. This perspective doesn’t diminish the value or meaning of faith but rather highlights its origins in our cognitive and psychological processes.

Therefore, God is a creation of the human brain. God did not create man; man created God!

Personally, I doubt this chronology, and recognised scientists have doubted it too. To mention just a few in passing: Francis Bacon; Johannes Kepler, who saw the Creator as so intelligent that he sought logic in everything and rejected the idea of a chaotic universe, and to whom, I might even say, we owe the replacement of superstition by reason; not to mention Isaac Newton, for whom, as you will remember, the Supreme God is an eternal, infinite, absolutely perfect being… and we could go on.

Yes, God is a creation of the human mind.

I consider myself an agnostic: I don’t focus on the question of whether God exists. I’m not an atheist because I believe in the importance of moral principles and, in a metaphysical sense, I think these principles are connected to higher, universal truths. This perspective is part of my work in neurophilosophy. I don’t seek a label or align myself with any particular religion because, to me, religion is… Well, I once had dinner with Salman Rushdie, and he said that religion is the bane of mankind. His point was that as soon as you codify belief (whether God exists or not is a separate question), the moment you start dividing people by saying “my God versus your God,” it becomes politics. And that’s where the trouble begins.

So, the first question is: Did God exist before the human brain existed? My answer, as a neuroscientist, is no—God is a construct of the human mind.

The second point, however, is that belief, in any form, can inspire people to achieve extraordinary things.

This isn’t to say that belief should be discouraged; rather, it highlights the power of belief as a motivator and a source of meaning, regardless of its objective truth. Belief, whether in God, principles, or ideals, can drive individuals and communities to accomplish remarkable feats.

To me, the concept of God is entirely explainable from a neurological perspective. It arises from the human brain’s need to create meaning and structure. However, once this concept is formalized into religion, it becomes codified belief—and codified belief is essentially a form of politics, shaped by societal norms and cultural influences.

Ultimately, and even philosophically, the question of God’s existence boils down to the broader debate about truth. If a claim, such as the existence of God, cannot be supported by clear, verifiable scientific evidence, then it can be dismissed on those same grounds. This doesn’t negate the personal or cultural significance of belief, but it underscores the importance of evidence and critical thinking in discussions about truth and reality.

To me, the debate about God ultimately ends in a tie—it’s like a football match that finishes one-one. As a result, I tend to set the question aside. However, as a neurologist, I understand the mechanisms behind our need for explanations and beliefs. Personally, I don’t hold any strong position for or against the existence of God. But I do have significant concerns, both personally and scientifically, with organized religion.

I was christened a Catholic, and I view the Catholic Church as one of the most dangerous institutions of its kind, not only historically but even today.

My issue lies not with individual faith or spirituality but with the structures and power dynamics of organized religion, which have often been used to control, manipulate, and harm rather than to uplift and inspire.

So, I would have a much stronger disagreement with someone who tries to convince me of the benefits of organized religion than with someone like you, who argues for the personal benefits of belief in God. I fully support belief as long as it strengthens an individual’s character and doesn’t impose on or undermine someone else’s beliefs. That’s how the human mind operates—your belief in God is as real and meaningful to you as my belief in not sticking a finger into an electrical socket is to me. And that’s perfectly fine. I respect your perspective and agree that belief, when it serves to uplift and empower, has its place.

Martin J. Stránský is currently an international lecturer on the negative effects of modern technology on the human brain and society; Founder and Director, Policlinic at Národní, Prague, and Private Neurological Associates, Prague; Visiting Professor in Physical Diagnosis and Neurosciences, St. George’s University School of Medicine; Founder and Director of the Prague Selective Program, the largest summer program for medical students in the world; Neurologist at St. George’s General Hospital, Grenada, W.I.; and Physician for the Embassies of the United States, Great Britain, Germany, and the Czech Republic.

Can faith influence one’s well-being, one’s life?

Interestingly, as physicians, we have access to compelling studies showing that believers, particularly those who pray, even if not fervently, tend to cope better with critical illness. I’ve observed this firsthand with patients in intensive care units.

Several studies support the idea that faith and prayer can provide psychological and emotional resilience, which may contribute to better outcomes during health crises.

This doesn’t necessarily prove the existence of God, but it highlights the tangible benefits of belief in helping individuals navigate extreme challenges.

We conducted a study at Yale that touches on the concept of mindfulness, but it also extends to the idea of being connected to a higher principle or believing in something beyond oneself—such as the belief that existence doesn’t simply end after death. The findings showed that individuals with these types of belief systems tend to perform 10 to 15% better in various measures of coping and resilience compared to those who lack such frameworks. This suggests that having a sense of purpose or connection to something greater can significantly enhance psychological well-being and overall functioning.

TECHNOLOGY: THE BRIGHT, THE DARK AND THE UGLY

In Romania, many parents and education experts argue that teaching children handwriting in school is an outdated and primitive practice. They believe that reading on screens is just as effective as reading from physical books. What are your thoughts on writing and reading on screens compared to traditional methods like handwriting and reading physical books? From the perspective of brain science, how do these approaches differ, and what impact do they have on learning and cognitive development?

Well, as is the case with just about everything discussed in the book, this isn’t about what I think; it’s about the facts. These individuals are entitled to their opinions, but they’re not entitled to their own facts. The reality, supported by every scientific study, is quite clear: these people are simply wrong. The evidence overwhelmingly contradicts their claims.

There’s a reason why some people hold this belief, and it stems from the direction modern society has taken, where technology is often seen as the ultimate solution. However, at the neurological, academic, and educational levels, every single study has demonstrated that reading from paper is more effective than reading from a screen, and that writing by hand is superior to typing on a keyboard. This isn’t a matter of opinion—it’s a fact. I would challenge anyone to find a single study that proves otherwise, because such studies simply don’t exist.

In fact, research has gone as far as dividing the same class of students into two groups, taught by the same teacher and following the same curriculum. One group learned using computers, while the other group printed the material and studied from paper. The results consistently showed that the group using paper achieved significantly higher standardized test scores than the group relying on screens. This highlights the clear advantages of traditional methods in enhancing learning and cognitive performance.

Is there an explanation for this?

There are several neurological reasons for this. First and foremost, it has to do with evolution and how our brains are wired. We don’t read by processing individual letters; instead, we recognize patterns on a page. When we try to recall what we’ve read, our brains work in a specific way: they first search for the location on the page where the pattern was, then they attempt to recall the word. This process is similar to how someone might search for a book in a library. They first look for the section (the pattern), then the specific book, pull it out, open it, and read the contents.

This spatial and pattern-based approach to reading and memory is deeply ingrained in our brain’s functioning. When we read from paper, we engage this natural process more effectively than when we read from screens, which often lack the same spatial and tactile cues. This is one reason why traditional methods like reading from paper and writing by hand are more effective for learning and retention—they align with the way our brains have evolved to process and store information.

When something is on paper, it’s framed—physically and spatially. This framing helps the brain process and retain information more effectively compared to reading on a computer, where you’re scrolling up and down. Even though digital text may be divided into pages, the act of scrolling disrupts the brain’s natural ability to anchor information spatially. On paper, you use your hands to turn pages or move sheets, which creates a tactile and spatial connection to the content.

This is the second reason why traditional methods are more effective: evolutionarily, our brains are designed to handle separate pieces of information as distinct entities, making them easier to locate and recall. By keeping information physically separate—like turning pages in a book—you’re leveraging the brain’s natural organizational systems, which scrolling on a screen simply cannot replicate.

Think of how children learn to add numbers in mathematics: they often use their fingers, looking at their hands and the numbers simultaneously to reinforce their understanding. These are simple evolutionary mechanisms that demonstrate why holding and reading from paper is far more effective than reading from a screen.

The same principle applies to writing. When you write by hand, you feel the paper beneath your hand, which gives you a sense of space, time, and movement. This tactile engagement stimulates your brain to think in a more complex and integrated way—not necessarily harder, but more deeply and holistically.

Writing by hand activates multiple areas of the brain, enhancing memory, creativity, and comprehension in ways that typing on a keyboard simply cannot replicate.

These natural, evolutionary processes highlight the enduring value of traditional methods in learning and cognitive development.

When you write by hand, it’s a bit slower, which is precisely why it tends to produce more thoughtful and intelligent results compared to typing. You can test this yourself right now. Imagine you have a pen in your hand and write the letter b, as in “boy.” Go ahead and do this slowly. Now, write the letter v, as in “Victor.” Pay attention to how it feels to write the b—notice the movement of your fingers and the tactile feedback. Now, do the same for the v.

Next, put the pen and paper aside and type the letters b and v on a keyboard. Click, click. You’ll notice that there’s no difference in the movement or sensation—it’s just the same action for both letters. This lack of differentiation in typing contrasts sharply with the distinct physical experience of writing by hand.

This simple exercise shows how much more your brain registers when you write by hand compared to typing. The same principle applies to the mental thinking process: writing and reading on paper engage your brain in a more complex and meaningful way. This is why students who write on paper or read from physical books tend to achieve better grades—they’re leveraging the brain’s natural, evolutionary mechanisms for learning and memory.

The third reason is that holding something in your hand, typically the right hand since most of us are right-handed, activates pathways to the left side of the brain, where the language center is located. This means two things are happening simultaneously: first, you’re processing the words, and second, the tactile experience of holding the material reinforces the learning in a separate part of your brain. This dual activation enhances memory and comprehension.

This is also why young children, when learning to read from paper, often move their mouths as they read—they’re essentially talking to themselves. This self-verbalization is a natural way for them to reinforce what they’re reading, as they instinctively understand that combining physical, tactile, and verbal engagement helps them learn more effectively. This multi-sensory approach is deeply rooted in how our brains are wired and is far less pronounced when reading from screens.

So, anyone who claims that learning from a screen is better is simply and unequivocally wrong.

Over the years, Dr. Martin J. Stránský has served as an advisor to several heads of state, ministers, and local and international organizations on healthcare systems. He is a member of the Advisory Committee of the Senate Commission for Czechs Living Abroad, under the Senate of the Czech Republic. He advised President Petr Pavel and his team during the presidential campaign and is currently the Chief Personal Advisor to the First Lady of the Czech Republic, Eva Pavlová.

Interestingly, some education systems in various countries are now reconsidering the reliance on digital tools. They are exploring a return to classical methods, using digital tools in a supplemental way – perhaps limiting their use to about an hour a day – rather than making them the primary mode of instruction.

I think we need to understand what the goal is: to become digitally aware, digitally capable, or digitally competent?

That question actually has two parts. The first part is about the role of school in general. In my view, the role of school boils down to two main things. First, it’s to teach a person—or a child—how to think, not necessarily to memorize facts.

For example, when I was growing up, we had to memorize things like the birth dates of kings, but today, we know that kind of information can easily be looked up. Instead, schools should focus on teaching how to think critically, solve problems, and create solutions. Second, schools should help children become socially adept—teaching them how to interact with others, whether it’s a child they like, a child who teases them, or just navigating relationships in general, because we are social beings.

Schools aren’t here to teach you how to use a computer; their purpose is much deeper than that.

The primary purpose of school is to teach you how to cultivate and utilize your mind effectively. While anyone can learn the basics of using a computer or delve into the realms of digital and artificial intelligence within a few days, the true challenge lies in mastering the most powerful “computer” of all, your brain. This “computer” is far more advanced, capable, and faster than even the largest supercomputers on Earth. That is the fundamental role of education: to help you unlock and harness the immense potential of your own mind.

Secondly, it’s crucial to recognize—and this is where we make a significant leap in understanding—that the digitization of society hasn’t truly improved anything. Instead, it has primarily made us lazier. It hasn’t contributed to longer life expectancy, enhanced intelligence, or greater happiness. It hasn’t reduced disease or, according to any study, increased illness. It hasn’t created wealth for the masses; rather, it has made a handful of individuals even wealthier.

In essence, the digitization of humanity has offered little more than increased laziness and comfort, without delivering meaningful progress or benefits to society as a whole.

This is not merely an opinion—it is a fact. I urge you and all your readers to send me any study that proves the opposite, so I can stop writing these books and traveling the world to lecture on this topic. The evidence is clear: according to all major happiness indexes, including a fascinating long-term study on human happiness that began at Harvard in 1938—tracking the same 3,000 families over generations—as well as similar studies that may have been conducted in your own country, human happiness has been steadily declining for three generations. The data speaks for itself, and it paints a troubling picture of our modern world.

We live in a society that is, in fact, more divisive than ever before.

While we’ve made significant technological progress, when we focus solely on digitization, it’s clear that we’ve created a society where every member is essentially forced to rely on technological products just to carry out basic daily tasks. This is particularly striking when we remember that we are, at our core, biological beings, not digital ones.

Today, it’s nearly impossible to open a bank account, book a flight, rent a car, make a purchase, or even pay for something without stepping into the digital world. I’m not sure about your country, but in many places, you can’t file a tax return, visit a city hall, or complete essential administrative tasks without navigating a digital application. This shift has fundamentally altered how we interact with the world, often leaving little room for those who struggle to adapt to this digital-first reality.

Why are we forcing 60- and 70-year-olds to become slaves to the digital world when all they want is to pick up a pencil and fill out forms on paper?

I have nothing against digitization itself, but from both a neurological and evolutionary perspective, there should be no activity on Earth that cannot be accomplished either face-to-face or on paper. Yet, in our modern society, we’re increasingly eliminating these options.

For example, in our country, the postal system is slowly disappearing, further pushing people into a digital framework that doesn’t always align with their needs or capabilities. This shift risks alienating those who are not comfortable or familiar with digital tools, creating unnecessary barriers in their daily lives.

Nobody writes letters anymore, but the opportunity to do so should still exist. You should have the chance to write a Christmas card, put a stamp on it, and send it to someone without the fear of mailboxes being taken away. Yet, about a month ago, the mailbox in my building was removed. This is a significant issue.

It’s not just about nostalgia—it’s about preserving basic, tangible ways of connecting with others in a world that’s increasingly digital and impersonal.

Removing these options limits our choices and disregards those who value or rely on traditional methods of communication.

The idea of pushing children to become digitally smart, in my view, is akin to handing a child a bottle of vodka and saying, “Here, learn to drink this,” rather than offering it as a choice. This represents a deeply troubling philosophical dilemma we’re facing. As you pointed out, many schools and parents still believe that the path forward is to abandon the biological principles that have shaped us as humans and simply disregard them. However, the critical issue is that there are no other principles that influence biology except biological principles themselves. By ignoring this, we risk losing touch with the very foundations that have allowed us to thrive as a species. We must be cautious not to sacrifice our biological essence in the pursuit of digital progress.

Where is humanity heading?

This brings us full circle to the very beginning of our conversation, where the overwhelming emphasis on technology and digitization signals what I believe to be the beginning of the end. That’s why I have significant concerns about introducing digital technology in schools before the age of 15 or 16. The focus during those formative years should be on training the brain, learning how to think critically, and embracing positive frustration. The most effective way students learn is by making mistakes, figuring out how to avoid repeating them, and then being challenged to make new mistakes. This process forces the brain to compare, adapt, and create solutions—essentially, it’s how the brain learns most efficiently.

Digital technology, on the other hand, often provides ready-made solutions, eliminating the need to think deeply or creatively. While it can offer answers, it doesn’t encourage the development of problem-solving skills or the ability to innovate. By prioritizing digital tools too early, we risk undermining the very cognitive processes that are essential for long-term growth and adaptability. The emphasis should remain on nurturing the brain’s natural capacity to learn, think, and evolve.

And this is precisely why we’re facing such significant issues with rising rates of depression, anxiety, declining test scores, and even lower IQs since the early 2000s—coinciding with the widespread introduction of digital technology in schools.

This shift is directly responsible for the fact that students today are not as intellectually capable as they were two decades ago. Again, this isn’t just an opinion; it’s a fact, and the evidence is clear.

In our country, the situation has deteriorated to such an extent that students must pass state exams to earn a so-called state degree. However, so many students were failing these exams that instead of reforming the education system to address the root causes, the authorities simply lowered the standards. Now, we have less capable students passing these exams, earning degrees, and applying for jobs that require those qualifications. But the real problem emerges when they start those jobs: they’re unable to perform. This creates a cycle of incompetence and frustration, undermining both the workforce and the credibility of our education system. It’s a stark reminder that lowering standards is not a solution—it’s a shortcut that ultimately harms everyone.

Not long ago, about six weeks back, the New York Times published an intriguing survey. They interviewed 600 HR managers from large corporations, and the results were quite revealing. Sixty percent of these managers admitted that they no longer offer interviews to recent college graduates. The reason? Within just three months of hiring, these young graduates often leave because they lack critical thinking, reasoning, and communication skills.

Although these graduates hold degrees, those qualifications no longer seem to carry much value. As a result, if you’re under 25, many of these companies won’t even consider speaking with you. This trend is now prevalent in about two-thirds of corporate America.

In Romania, 45% of graduates are functionally illiterate. As in the situation you described, they have diplomas, but those diplomas do not translate into practical skills or competences. In your book you emphasise that it’s not how much you know, but rather how you think. Are there solutions that could reverse this trend in society?

I know this might sound depressing, but I’m simply presenting the facts—facts that very few people are talking about, though I must say the debate is gradually gaining momentum. Some countries are now starting to address the issue of digital use in schools, as well as social media and cell phone use among children.

For instance, Australia, as you’re probably aware, has already passed a ban on social media for younger age groups. Similarly, the Irish Medical Association has proposed banning cell phones for children, a move I fully support. I’ve always been someone who leans toward more forward-thinking, and perhaps even controversial, proposals when it comes to these matters.

Based on what we’re increasingly learning, I’m now proposing something radical: a ban on cell phones for all of humanity. The reason is simple: cell phones add very little real value, and they’ve become a catalyst for widespread laziness and the erosion of societal bonds.

I believe it’s perfectly reasonable to keep laptops and computers, as they serve practical purposes. However, unlike cell phones, it’s much harder to casually open a laptop on the metro or in public spaces. Without cell phones, people might start reading books again or even engage in conversations with one another, much like we did when we were children, instead of mindlessly staring at their screens.

WHAT DOES PERFECTION LOOK LIKE?

I read that you recommend training the brain to keep it active, especially for children, by learning a musical instrument or studying a new language. I have to admit that I took up the piano at the age of 47, even before reading your work. Is the brain of someone who listens to classical music different from the brain of someone who listens to hip-hop, for example?

No. There may be subtle differences, but the brain of someone who listens to classical music and the brain of someone who listens to hip-hop are both significantly different from the brain of someone who doesn’t listen to music at all. Music activates more areas of the brain simultaneously than practically anything else we do.

Of course, it engages hearing, but it also sparks imagination and evokes deep emotions. While I’m not a huge fan of hip-hop now, I used to enjoy it once upon a time.

It’s that feeling and imagination evoked by music that allows the brain to tap into its vast library of memories and emotions. This is why most of us who listen to music—any kind of music—find it so enjoyable. Music has a unique ability to evoke feelings, emotions, and even memories or smells in a way that reading simply cannot.

That’s why music is so powerful, and I’m delighted to hear that you’ve taken up the piano. I started learning the Scottish bagpipes about fifteen years ago, and while it was an interesting experience, the piano is far better—mostly because it’s not as loud. The bagpipes are incredibly loud, and when you’re still learning, it sounds like you’re torturing a sheep. Unsurprisingly, anyone nearby tends to leave the room!

I believe all music activates both hemispheres of the brain, but the piano holds a special place. I was raised playing the piano because my mother was European, and she insisted on it. The piano is an excellent instrument to learn—it’s like the father or the center of the musical world.

If you play the piano, you gain a deeper understanding of all types of music. It provides a foundation that connects you to everything else in the world of music.

WHAT MAKES US HAPPY

Is there an ideal human being? If yes, how would you describe such a person? Also, if tomorrow you were to become a father, how would you raise your children in today’s world to help them grow closer to your vision of the ideal human being?

I’ve never been asked this question before. First and foremost, I believe it’s crucial to recognize that there is no such thing as an ideal human being. Many of the psychological challenges we face stem from the pursuit of perfection, particularly in the context of parenting. Mothers, for instance, often strive to raise perfect children, which can create unrealistic expectations and contribute to various issues.

This is where many of these problems begin. There’s no such thing as a perfect child, nor is there such a thing as a perfect person.

In my view, the ideal person is someone who has a deep psychological understanding of themselves, regardless of who they are or what they’re like.

Such a person embodies two key qualities. First, they are independent in the sense that, over time, they come to realize that true happiness stems from freedom: freedom from the grip of thoughts, from material possessions, and from external dependencies.

They also see themselves as a unique yet self-contained psychological entity, one that is, to a certain degree, resilient and capable of navigating life’s experiences without being overwhelmed by them.

The second essential quality is respect. Without leaning into religious connotations, I believe the well-known saying, “Do unto others as you would have them do unto you,” encapsulates this principle perfectly. To me, this is the foundation of morality.

I think that, in its essence, this is more than sufficient. Everything else is essentially an extension or variation of this idea.

When it comes to raising children, I would focus on nothing more and nothing less than nurturing these two traits: independence and respect.

To add a final thought, while this is my personal perspective, if we turn to science, the ultimate goal is to raise individuals who are happy. After all, human happiness is perhaps the most cherished of all emotions.

You can be happy without being in love, although love is, of course, a wonderful and enriching emotion. However, it’s not just my opinion—studies have shown that human happiness is fundamentally tied to one thing alone. It’s not determined by wealth, intelligence, status, or even personality.

Instead, happiness is rooted in the number of genuine, biological, and social connections you have, ideally between three and seven close relationships.

The happiest people in the world—whether they live in a slum or a penthouse—are those who have a real, human support network around them. I’m not talking about social media connections, but rather face-to-face, meaningful relationships with others.

With this in mind, I would want to ensure that my children grow up cultivating real-life friendships and forming authentic partnerships. These connections, more than anything else, will be the foundation of their happiness.

When I was younger, I was deeply impressed by intelligence. Now that I’m older, what truly stands out to me is kindness. What about you, what qualities in people impress you the most?

I think I’m always impressed when I meet someone who is, shall I say, truly grounded within themselves. It’s someone who understands themselves deeply and exudes a sense of inner strength, consistency, and even a certain degree of solitude.

When such a person is well-rounded and possesses a unique perspective, they impress me even more, as they become a role model. Throughout my life, I’ve been fortunate to meet a few individuals who served as immense role models and profoundly influenced me.

However, I feel that this concept of role models has largely disappeared from modern thinking—or at least, it’s been distorted. Today, people are rarely upheld as role models for their character or achievements. Instead, if they are celebrated, it’s often based on their popularity or the number of followers they have, rather than what they’ve accomplished or the wisdom they embody. This shift, in my view, is deeply concerning and represents a significant loss in how we value and inspire one another.

The most glaring example of this is what I consider one of the worst creations in human history: THE INFLUENCER.

To be an influencer, you have to be, at the very least, unconventional and, at the very most, completely outlandish, often to the point of being psychologically unhinged. The entire concept revolves around attracting attention, not for any meaningful contribution or achievement, but rather for what I’d call “freak value.” It’s about standing out for the sake of standing out, rather than for anything you’ve actually done to benefit others or society.

The idea of a professor, priest, teacher, or even a parent serving as a role model—someone to look up to—has, over the last few generations, taken a significant turn for the worse. I believe this shift has been to our detriment.

This is also because in today’s society, equalisation and inclusion have made non-values equal to values.

Indeed, this decline is linked to another worrying trend in today’s digital society: the emphasis on equality at the expense of talent. In our attempt to level the playing field, we have inadvertently diminished the value of exceptional ability, wisdom and achievement. This, I fear, has led to a culture in which mediocrity is often celebrated and true role models become increasingly rare.

This is another biological and evolutionary dilemma.

Mankind has progressed on the principle of competition, not on the idea that each individual in our species should be identical to the others.

Of course, equality is essential in certain areas—such as under the law, in the status of men and women (or rather, ensuring women are equal to men), and in other fundamental rights. However, we don’t need to accept or elevate individuals solely because they are different. While they deserve respect, that doesn’t mean we must give them opportunities—like jobs—simply because they come from a historically discriminated background.

Similarly, if one person earns an A in school and another earns a C, the person with the A should rightfully be considered for the job. It doesn’t serve anyone to tell the person with a C, ‘You tried hard, and that’s just as good as the person who got an A.’ Merit matters, and pretending otherwise undermines both individual achievement and societal progress.

Effort is important—it’s the starting point—but ultimately, results must also matter. This is another significant issue we’ve stumbled into, partly due to the rise of the digitalized world and the influence of liberalism and neoliberalism that began to shape politics in the late sixties and seventies. Today, this is reflected in the political leaders we see and the broader cultural trends.

There’s now a growing movement to reclaim our individual identities and values, which is a positive shift. I realize I’m straying from the original question, but perhaps this is something worth incorporating into the discussion as well.

Do you think mankind will achieve immortality?

Oh, I hope not! Biologically, I believe it’s impossible. Whether we like it or not, our primary purpose in life is clear: to have children, pass on our genes, educate them, allow them to learn from us, and then step aside.

That’s why we’re here. Everything else is just dessert: hobbies, achievements, experiences. It’s the gravy, the cherry on top. But at the core, our fundamental reason for existence is rooted in that biological imperative.

We’re here to pass on our genes. There’s a wonderful book by Richard Dawkins called “The Selfish Gene” that I highly recommend. Personally, I’m a romantic, and I don’t like to think of myself as merely a packet of genes with two legs and a brain.

But again, that’s the icing on the cake. Over time, we’ve developed value systems to add meaning to our existence. However, at their core, all these value systems—no matter how complex or profound—are ultimately here to help our genes survive in a better position than before. Or at least, that’s what they’re supposed to do.

Of course, our discussion today really highlights how these value systems have been transplanted onto a completely different playing field—one that’s no longer purely biological. Instead, it’s a technological playing field, and that changes everything.

I won’t ask if you’re a happy person, since we’ve already established that happiness is somewhat intangible. Instead, I’ll ask this: Do you feel fulfilled within yourself—deep down, in your interior? Are you at peace with who you are?

I have to say, I feel completely fulfilled. I consider myself extremely lucky. When I reflect on what I’ve said, what I’ve written, and what I’ve accomplished, I realize how fortunate I am. First, I’m still here at my age—that in itself is a gift. Second, I have three children and two grandchildren, which brings me immense joy.

Third, I’ve compiled my thoughts into a book—my second one—and I’ve been writing quite a bit. Seeing my work reach others satisfies the exhibitionist part of my character.

I’ve done almost everything I wanted to do and seen almost everything I wanted to see. Now, I’m turning inward, using everything I’ve learned to explore and apply philosophical principles as a neurophilosopher.

So, yes, I’m extremely content. The only thing that still sparks my curiosity is whether I’m right about the ideas I’ve written, particularly about the dying brain and what happens to us after we return to where we came from.

Although the idea of what comes after life may be frightening to some—and I admit, it scares me too—I’m not in a rush to experience it tomorrow. I’m hoping to stick around a bit longer, perhaps even visit you in Romania someday.

But yes, I feel incredibly fortunate. I’m very, very lucky. I also have to say, as a general observation, that I think I’m probably a generation ahead of you. However, I believe our two generations, in perspective, are among the best in the history of humankind.

When we were born, there were only a handful of antibiotics, travel was limited, and in Europe, we were living under communism, among other challenges. Life was much more difficult. Yet, within our lifetimes, we’ve seen the human lifespan increase dramatically.

Today, we can get up and travel to almost any country we want. We can see and read anything we desire. The opportunities available to us are unprecedented. We’ve even put a man on the moon. It’s truly remarkable how much the world has transformed during our time.

We’ve used our imagination to create incredible products and advancements. Over the last two hundred years, since the Industrial Revolution, progress has surged like a massive tsunami.

Then, in the 2000s, with the emergence of the iPhone, that tsunami finally crashed onto the shore, sweeping forward and overwhelming everything in its path. Now, the wave is receding, and we’re beginning to see the consequences.

We can observe the negative changes affecting Generation Z and the generation before it, the millennials. Metrics like human intelligence, happiness, and satisfaction are declining. Shockingly, 30 to 40 percent of the developed world now struggles with depression and anxiety. More people are taking their own lives per capita than ever before.

In fact, as I mention in my book, more people die by suicide today than from all wars, terrorist acts, and accidents combined. We are no longer a happy species, and that’s what my book explores—the reasons behind this profound shift and what it means for our future.

WHY? WHO?

What do you think is the ultimate goal behind this societal regression and the overwhelming influence of digital tools and technology? Do you believe it’s being done deliberately? If so, why? And who are the individuals or groups that might be driving this agenda?

Everything we do as humans is deliberate. In some cases, that deliberation is driven by one factor; in others, it’s driven by something entirely different.

The Holocaust was deliberate. The rise of digital technology was also deliberate—and initially, it was well-intentioned. However, the deliberate exploitation of that technology is another matter entirely. Many of the pioneers behind these advancements, including artificial intelligence, either restrict its use for their own children or have signed petitions warning against its dangers. Yet, they continue to push forward, ensuring that the rest of us remain addicted to it.

This is what’s known as the human condition. In the past, people used terror, murder, and political oppression to amass wealth, gain power, and control populations. Today, they use digital technology to achieve the same goals.

It is the owners of digital technology who are using it to exploit us. And because it is addictive and has become pervasive in society, no one is willing to step back from it. This has gone so far that digital technology and its structures are now erasing all traditional forms of human organization. It is rendering democracy irrelevant. It is making government irrelevant.

Look at what is happening in the United States. The world’s most dangerous individual, Donald—what’s his name—Elon Musk, is now aligning himself with a psychopathic president to dominate the planet.

I assume these “bad” guys are collaborating with some of your colleagues to get information, access science and develop technology to the level it has reached today, correct?

My book ultimately offers what I hope is a logical answer to the question you just asked: essentially, what is going to happen. And, as with everything in life, there are only two options.

The first option is what you mentioned: that somehow there will be an internal reset. Whether it’s scientists, the general public, parents, or society as a whole, people will come to realize what’s happening and pull back. It would be similar to what happened with smoking cigarettes, for instance. There was a time when smoking was considered cool. I remember in all the old black-and-white movies from the 1930s, everyone smoked. When a woman entered a room, the men would rush to light her cigarette. Think of Marlene Dietrich—she’d pull out a cigarette, and everyone would scramble to light it for her.

Then, of course, the science emerged showing that smoking caused cancer and was harmful. But initially, nobody paid much attention to the science. Over time, however, societal perception shifted. People who smoked began to be seen as unpleasant—they smelled bad, their fingers turned yellow, and so on.

Women who smoked developed wrinkles, and that, in many ways, was seen as far more important.

My point is essentially this: it’s possible that instead of 100% of people using digital technology all the time, usage could drop to levels similar to smoking, with somewhere between 17% and 27% of people using it regularly while the rest of us use it occasionally or not at all. But that scenario is unlikely because digital technology is addictive and ubiquitous; everyone is doing it.

Here’s the critical difference: we didn’t force children in grammar school to smoke a pack of cigarettes a day. That’s the problem. Today, we’re essentially forcing children to engage with digital technology from a very young age, and that’s why the situation is so dire.

That’s why the second possibility is more likely: an external reset. Someone, somewhere, will pull the plug. I explore this idea in my book through the Fermi Paradox; if you’ve read to the end, you’ll see it’s a perfect analogy for where we are now. The paradox illustrates that there’s no other way this can end. Once you become dependent on a non-biological product, you become extremely vulnerable to any changes in that product. And since you can’t control those changes, they will inevitably affect you negatively.

So, I’m afraid that by the year 2050, something monumental will happen. Metaphorically speaking, there will be a breaking point. There are simply too many forces at play in the world right now, and the trajectory we’re on is unsustainable.

And since we live in a connected world, it’s very easy for all of these factors to reach a flashpoint—what I call a breaking point—and for chaos to ensue.

I grew up in Manhattan in the sixties and seventies, when air conditioners were just becoming common. They were these big, boxy machines that people would stick in their windows to cool their homes during the summer. Around 1968 or 1970, there was an intense heatwave. Everyone plugged in their air conditioners and turned them on, overwhelming New York’s electricity grid. The result was a series of blackouts. The entire city went dark.

It took just six hours of Manhattan being without power for the governor to call in the army. Why? Because the city was in flames. People from Harlem began burning and looting, and the unrest spread throughout Manhattan. Six hours without electricity, and people reverted to primal behaviour—metaphorically speaking, they became like apes. Basic human instincts took over.

Now, imagine what would happen if, for just one day, the digital world collapsed. We’ve already seen a glimpse of this. A few months ago, a faulty security update caused Microsoft Windows systems to crash, leaving millions of computers worldwide inoperable. That was just a partial collapse. What would happen if the entire digital infrastructure went down, even for a day? The consequences would be unimaginable.

Airlines wouldn’t be able to fly. Police forces wouldn’t be able to function. Ambulances wouldn’t be able to operate. Patients in hospitals would lose access to their records because paper records are no longer the norm. Imagine a doctor with a patient in front of them, unable to access their medical history. They wouldn’t know what medications the patient was taking, who they were, whether they’d had surgery, or anything else critical to their care.

Just think about it. The chaos would be unprecedented.

But did you see what happened just a few hours ago when TikTok was banned in the United States? Within those few hours, millions of people called 911 because they didn’t know what was happening. They became anxious, panicked even. Can you imagine the level of addiction we’re dealing with here? Are you aware that Russia has been conducting exercises to prepare for exactly this kind of scenario? They’re testing how to handle being disconnected—shut out from the digital world entirely. It’s a stark reminder of how vulnerable we are in this hyperconnected age.

It’s no longer about building a bunker to survive a nuclear bomb. Now, it’s about preparing for the possibility of having your digital accounts cancelled or being cut off from the digital world. How pathetic is that? It’s a stark reflection of how deeply dependent we’ve become on technology—where losing access to a digital platform feels like a crisis on par with existential threats.

Although what you say doesn’t sound very optimistic, unlike you, the agnostic, I at least have on my side the belief that God governs both chaos and order, and since my life is wholly filled with Him, I see the future more optimistically.

Professor, thank you for giving me such an interesting, complex and scientifically enlightening read through “The Rise and Fall of the Human Mind” and for this dialog. Because you spoke of the happiness generated through connections, I hope that at least through this journalistic “rapprochement” we will remain… connected and happier than we were before it…

And I too thank you for the opportunity to sound the alarm on addiction. If we make even one person think, let alone save them, our meeting will already have been worthwhile!

* Diana Beatrice Ionescu contributed to this interview.